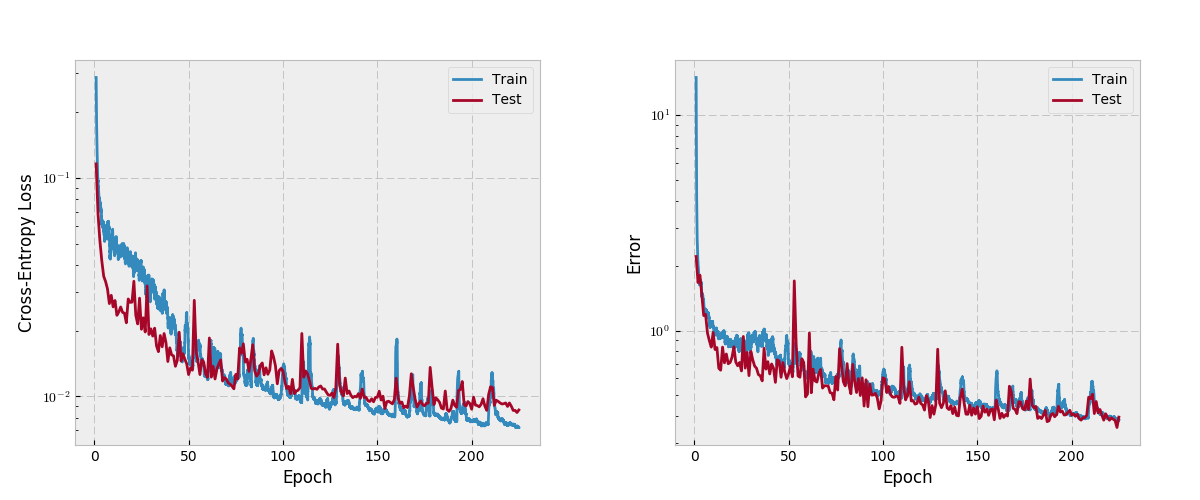

`torch.nn.functional.cross_entropy` (PyTorch 1.12 documentation)

`torch.nn.functional.cross_entropy(input, target, weight=None, size_average=None, ignore_index=-100, reduce=None, reduction='mean', label_smoothing=0.0)`

This criterion computes the cross entropy loss between input and target. If `reduction` is `'none'`, the output has the same size as the target: $(N)$, or $(N, d_1, d_2, \ldots, d_K)$ with $K \geq 1$ in the case of K-dimensional loss.

Examples: the documentation builds the criterion with `loss = nn.CrossEntropyLoss()`, the logits with `input = torch.randn(3, 5, requires_grad=True)`, and the target starting from `torch.empty(3, dtype=torch.long)`, which must hold valid class indices before it is passed to the loss.

Let’s see what happens when cross-entropy loss is used.
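The shape rules above can be checked with a short sketch. It assumes a recent PyTorch install; the tensor sizes, the seed, and the names `logits`, `seg_logits`, and `seg_target` are illustrative choices, not part of the documentation.

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)  # illustrative seed, only for repeatability

# Raw, unnormalized scores (logits) for N=3 samples and C=5 classes.
logits = torch.randn(3, 5, requires_grad=True)
# One class index in [0, C-1] per sample.
target = torch.randint(0, 5, (3,))

# reduction='mean' (the default) returns a scalar.
loss_mean = F.cross_entropy(logits, target)
# reduction='none' returns one loss per sample, shaped like the target: (N,).
loss_none = F.cross_entropy(logits, target, reduction="none")

# Gradients flow back to the logits.
loss_mean.backward()

# K-dimensional case (K=2): input is (N, C, d1, d2), target is (N, d1, d2).
seg_logits = torch.randn(2, 5, 4, 4)
seg_target = torch.randint(0, 5, (2, 4, 4))
seg_loss = F.cross_entropy(seg_logits, seg_target, reduction="none")

print(loss_mean.shape, loss_none.shape, seg_loss.shape)
```

With `reduction='none'` the per-element losses are returned unreduced, so averaging `loss_none` by hand reproduces the default `'mean'` result when no class weights are used.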

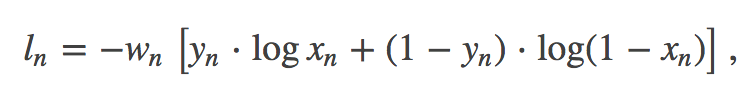

`input` (Tensor): predicted unnormalized scores (often referred to as logits). `target` (Tensor): ground truth class indices or class probabilities.

The input is expected to contain raw, unnormalized scores for each class. Input has to be a Tensor of size either $(minibatch, C)$ or $(minibatch, C, d_1, d_2, \ldots, d_K)$ with $K \geq 1$ for the K-dimensional case. This criterion expects a class index in the range $[0, C-1]$ as the target for each value of a 1D tensor of size minibatch; if `ignore_index` is specified, this criterion also accepts this class index (this index may not necessarily be in the class range). The target therefore has shape $(N)$, where each value satisfies $0 \leq \text{targets}[i] \leq C-1$, or $(N, d_1, d_2, \ldots, d_K)$ with $K \geq 1$ in the case of K-dimensional loss.

If provided, the optional argument `weight` should be a 1D Tensor assigning weight to each of the classes. This is particularly useful when you have an unbalanced training set. When `weight` is specified, the mean over the minibatch is a weighted average:

$$\text{loss} = \frac{\sum_{i=1}^{N} \text{loss}(i, \text{class}[i])}{\sum_{i=1}^{N} \text{weight}[\text{class}[i]]}$$

`torch.nn.NLLLoss` is like cross entropy but takes log-probabilities (log-softmax values) as inputs. Note that the main reason PyTorch merges the log-softmax with the cross-entropy loss calculation in `torch.nn.functional.cross_entropy` is numerical stability. It just so happens that the derivative of the [...]

For the binary case, the documentation of `torch.nn.functional.binary_cross_entropy_with_logits` gives this example: `input = torch.randn(3, requires_grad=True)`, `target = torch.empty(3).random_(2)`, `loss = F.binary_cross_entropy_with_logits(input, target)`, `loss.backward()`.

A common forum question (model with CrossEntropyLoss doesn't apply softmax): I am using `nn.CrossEntropyLoss()` in a model that I am developing. The problem that I am having is that the model outputs a vector of size (batchsize classes, 1) when it is supposed to output a (batchsize, 1) vector. Note that `nn.CrossEntropyLoss` is a loss function, not an optimizer, and that it applies log-softmax internally, so the model is expected to output one raw score per class for each sample rather than a single value.
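The relationships described above (cross entropy as log-softmax followed by negative log-likelihood, the weighted mean, and `ignore_index`) can be verified numerically. This is a minimal sketch under assumed values: the logits, targets, and class weights are made up for illustration.

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)  # illustrative seed

N, C = 4, 3
logits = torch.randn(N, C)               # raw scores, shape (N, C)
target = torch.tensor([0, 2, 1, 2])      # class indices in [0, C-1]
weight = torch.tensor([1.0, 2.0, 0.5])   # hypothetical per-class weights

# 1) cross_entropy equals nll_loss applied to log-softmax outputs.
log_probs = F.log_softmax(logits, dim=1)
ce = F.cross_entropy(logits, target)
nll = F.nll_loss(log_probs, target)

# 2) With `weight`, reduction='mean' divides the summed weighted losses
#    by the sum of the weights of the target classes.
per_sample = F.cross_entropy(logits, target, weight=weight, reduction="none")
manual_weighted = per_sample.sum() / weight[target].sum()
weighted = F.cross_entropy(logits, target, weight=weight)

# 3) Targets equal to ignore_index (default -100) are left out of the mean.
target_ignored = torch.tensor([0, -100, 1, 2])
kept = target_ignored != -100
with_ignore = F.cross_entropy(logits, target_ignored)
without_row = F.cross_entropy(logits[kept], target_ignored[kept])

print(torch.allclose(ce, nll))
print(torch.allclose(manual_weighted, weighted))
print(torch.allclose(with_ignore, without_row))
```

Passing raw logits to `cross_entropy` rather than applying softmax and a logarithm separately lets PyTorch compute the log-softmax in one numerically stable step.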
`class torch.nn.CrossEntropyLoss(weight: Optional[torch.Tensor] = None, size_average=None, ignore_index: int = -100, reduce=None, reduction: str = 'mean')`

This criterion computes the cross entropy loss between input and target, that is, the cross entropy evaluated after a softmax over the input. It combines `nn.LogSoftmax()` and `nn.NLLLoss()` in one single class and is useful when training a classification problem with C classes. The related torch module is `torch.nn.CrossEntropyLoss`, but it can not be [...]
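As a rough illustration of the module form, the sketch below compares `nn.CrossEntropyLoss` with an explicit `nn.LogSoftmax` plus `nn.NLLLoss` pipeline, then feeds it the output of a toy classifier. The `nn.Linear(10, 5)` model, batch size, and feature size are hypothetical and stand in for any network whose last layer emits one raw score per class.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)  # illustrative seed

logits = torch.randn(3, 5, requires_grad=True)
target = torch.tensor([1, 0, 4])

# One-step criterion.
criterion = nn.CrossEntropyLoss()
combined = criterion(logits, target)

# Equivalent two-step pipeline: LogSoftmax over the class dimension, then NLLLoss.
log_softmax = nn.LogSoftmax(dim=1)
nll = nn.NLLLoss()
two_step = nll(log_softmax(logits), target)

# Hypothetical toy classifier: the last layer has no softmax and returns
# logits of shape (batch, C); the target holds one class index per sample.
model = nn.Linear(10, 5)
x = torch.randn(8, 10)
y = torch.randint(0, 5, (8,))
out = model(x)              # shape (8, 5)
loss = criterion(out, y)    # scalar
loss.backward()

# Softmax is only needed when probabilities are wanted for reporting.
probs = torch.softmax(out.detach(), dim=1)
pred = probs.argmax(dim=1)  # shape (8,)

print(combined.item(), two_step.item(), out.shape, pred.shape)
```

Because the criterion already applies log-softmax, adding a softmax layer to the model before this loss would normalize the scores twice.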
